
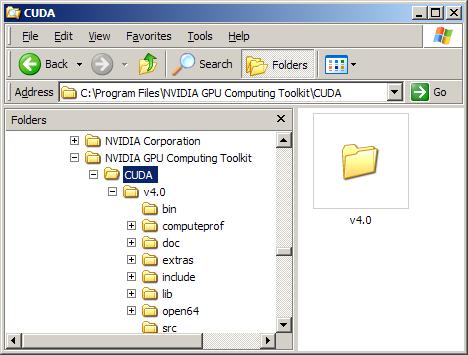

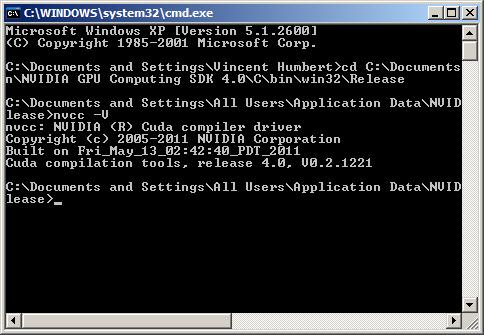

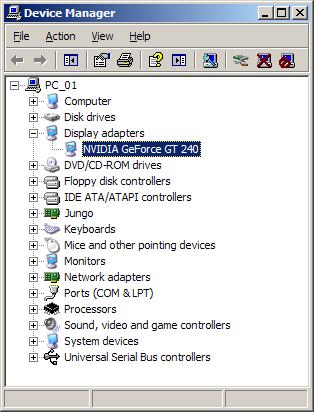

Compiling and linking GPU programs

Introduction

To make an application runnable on GPUs, one has to use either the CUDA programming language or the directive-based OpenACC approach. The main idea is to start from a program that runs in the normal way on the host CPU(s), but rather slowly. To speed the program up, GPUs need to be brought into action. This is accomplished by adding CUDA C++ code (sometimes even CUDA Fortran, if the PGI compiler is used) or by inserting #pragma acc (C/C++) or !$acc (Fortran) directives that move work to the GPU.

6.4.2 CUDA approach

The example for this approach is a CUDA program that calculates the well-known AXPY vector operation in double precision (DAXPY). CUDA C programs are compiled with the nvcc compiler, which expects CUDA C source files to have a .cu extension. The K40 and the K80 both support compute capability 3.x. Please note that we are using NVIDIA's CUDA C/C++ compiler front end. To figure out which libraries get linked, run the ldd command against your GPU executable.
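For reference, the AXPY operation discussed above computes y = a*x + y element by element. The following is a minimal host-side sketch in plain C of the slow CPU baseline that the GPU versions are meant to accelerate. It is not the original program, and the name daxpy_host is hypothetical. In a CUDA version, each GPU thread would typically handle one index i of this loop.

```c
#include <stddef.h>

/* Serial reference DAXPY on the host CPU:
   y[i] = a * x[i] + y[i] for every i in [0, n).
   daxpy_host is a hypothetical name used only for this sketch. */
void daxpy_host(size_t n, double a, const double *x, double *y)
{
    for (size_t i = 0; i < n; ++i) {
        y[i] = a * x[i] + y[i];   /* double-precision multiply-add per element */
    }
}
```

A baseline like this is also handy for checking the results of a GPU version against known-good values.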

OpenACC approach

It is often easier to use OpenACC directives than the CUDA language, but you then need the PGI compiler to interpret the directives. They can be embedded in C/C++ or Fortran code. OpenACC closely resembles OpenMP directives and is often a good starting point for moving part of your code to GPU accelerators. One beauty of this approach is that you can move your application to accelerators gradually, and there is no need to learn CUDA. There is good news for CUDA programmers too: existing CUDA code can interoperate with OpenACC, so there is no need to throw away well-functioning CUDA kernels. Just invoke them (usually via a wrapper) from within an OpenACC region. It is said that CUDA codes still outperform the equivalent OpenACC constructs, but this gap is clearly narrowing with newer releases of OpenACC compilers.

The corresponding DAXPY code written in OpenACC is rather simple. We provide both C/C++ and Fortran versions.

C/C++ version of OpenACC DAXPY

In the C/C++ version of our OpenACC DAXPY, pay attention to the directives. They in fact dictate how, where and when your data will be used on the GPUs.

To compile and link the application, the PGI environment must be used. Unless you have a strict requirement for the C++ compiler (pgc++), it is often more convenient to use PGI's C compiler, with the options -O3 -Minfo=all -acc -ta=tesla:cc… Here we target only one 3.x compute capability. PGI does not have special support for the other, but the code will still work on the K80 as well.

Since we asked for compiler information via -Minfo=all, we are rewarded with a rather exhaustive listing of messages. In this case they reveal how well we succeeded in our attempt to keep the GPU busy, at least in theory.
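As a rough illustration of how such directives look, here is a minimal OpenACC DAXPY sketch in C. It is not the original listing: the function name daxpy_acc and the choice of data clauses are assumptions made for this example. The data region states which arrays are copied to and from the device, and the inner loop only declares them present.

```c
/* OpenACC DAXPY sketch: y[i] = a * x[i] + y[i].
   daxpy_acc and the clause choices are assumptions for illustration,
   not the original program. */
void daxpy_acc(int n, double a, const double *restrict x, double *restrict y)
{
    /* Data region: x is only read on the device (copyin);
       y is copied in and copied back out when the region ends (copy). */
    #pragma acc data copyin(x[0:n]) copy(y[0:n])
    {
        /* Both arrays are already on the device, so only declare them present. */
        #pragma acc parallel loop present(x[0:n], y[0:n])
        for (int i = 0; i < n; ++i) {
            y[i] = a * x[i] + y[i];
        }
    }
}
```

If the file is built without OpenACC support, the pragmas are simply ignored and the loop runs serially on the host, which makes it easy to move the code to the accelerator gradually.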

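Among the compiler messages is a line beginning "Generating present(x", which reports a data clause the compiler generated. It is recommended to perform a one-off initialization of the GPU devices before any OpenACC code runs.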
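The one-off initialization mentioned above could be sketched as follows. The helper name gpu_init_once is hypothetical. acc_init and acc_device_default come from the OpenACC runtime API, and the _OPENACC macro guard is an assumption made here so that the file still builds with a compiler that has no OpenACC support.

```c
#ifdef _OPENACC
#include <openacc.h>   /* OpenACC runtime API, present only with an OpenACC compiler */
#endif

/* Hypothetical helper: initialize the accelerator exactly once.
   Returns 1 on the first call and 0 on every later call. */
int gpu_init_once(void)
{
    static int initialized = 0;   /* remembers whether a previous call already ran */
    if (initialized) {
        return 0;                 /* nothing left to do */
    }
#ifdef _OPENACC
    acc_init(acc_device_default); /* pay the device start-up cost up front */
#endif
    initialized = 1;
    return 1;
}
```

Calling such a helper early in the program keeps the device start-up cost out of the first OpenACC region.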


December 2016
